This Article on the Central Limit Theorem Covers Explanations for a Layman, a Kid, a Student, a College Graduate and a Mathematician

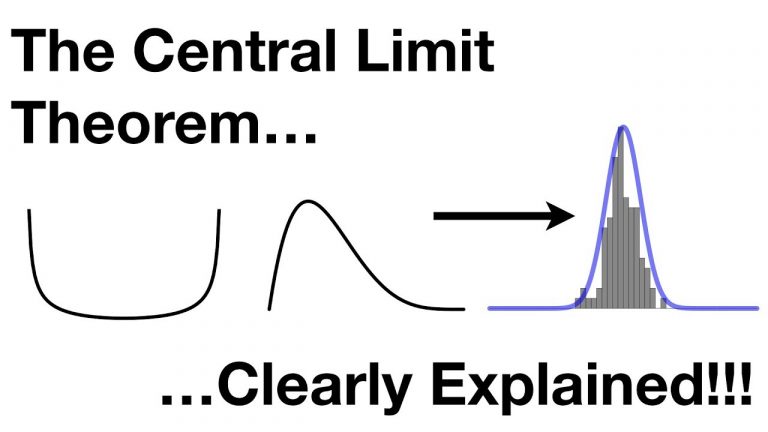

The Central Limit Theorem (CLT) is like a special rule in the world of numbers and data. It helps us understand how groups of numbers behave when we take their averages. Imagine you’re collecting information from lots of people about their heights. People’s heights can be all over the place: some are tall, some are short, and the rest fall everywhere in between. Now, let’s say you take groups of people, maybe 10 at a time, and find the average height in each group.

The Central Limit Theorem says something fascinating: No matter what the original heights look like (whether they’re a jumble of different heights or follow some unusual pattern), if each group is reasonably large and you collect many such groups, the group averages will pile up in a special kind of curve that we call a “bell curve” or “normal distribution.” The larger each group, the closer the fit. This bell curve is a smooth, rounded shape that’s really common in nature.
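The height example can be tried out with a small simulation. The sketch below is only an illustration under made-up assumptions: the two-humped population, the numbers 120 cm and 175 cm, and the helper names `random_height_cm` and `share_within_one_sd` are all hypothetical. It compares individual heights with averages of groups of 10, using the fact that a bell curve keeps roughly 68% of its values within one standard deviation of its center.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

def random_height_cm():
    """Draw one height from a made-up, two-humped population (not a bell curve)."""
    if random.random() < 0.5:
        return random.gauss(120, 8)   # hypothetical shorter subgroup
    return random.gauss(175, 8)       # hypothetical taller subgroup

def share_within_one_sd(values):
    """Fraction of values lying within one standard deviation of their mean."""
    center = statistics.fmean(values)
    spread = statistics.stdev(values)
    return sum(abs(v - center) <= spread for v in values) / len(values)

# 5,000 individual heights versus 5,000 averages of groups of 10.
individual_heights = [random_height_cm() for _ in range(5000)]
group_averages = [
    statistics.fmean([random_height_cm() for _ in range(10)])
    for _ in range(5000)
]

# A bell curve puts roughly 68% of its values within one standard deviation.
print(f"individuals within 1 SD:    {share_within_one_sd(individual_heights):.2f}")
print(f"group averages within 1 SD: {share_within_one_sd(group_averages):.2f}")
```

If the theorem is doing its job, the second share should land near 0.68 while the first, computed on the raw two-humped heights, should sit noticeably lower.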

So, why is this significant?

It helps us make predictions about the whole group of people based on these average heights. Even if the original heights didn’t follow a nice pattern, these averages tend to behave in a predictable way. This is super handy for statisticians, the people who work with numbers to understand things better.

The Central Limit Theorem (CLT) has numerous practical applications across various fields. Here are 11 examples of how the CLT is applied:

1. Polling and Elections: When predicting election outcomes, pollsters often collect data from different groups of voters. By averaging these results, they can use the CLT to estimate the likely distribution of votes and the level of uncertainty in their predictions.

2. Quality Control: In manufacturing, companies sample a batch of products to check for defects. The CLT allows them to estimate the overall quality of the entire batch based on the average quality of the samples.

3. Financial Markets: When analyzing stock returns or other financial data, the CLT motivates treating sums or averages of many individual returns as approximately normal, which is important for risk assessment and portfolio management. The approximation is weaker when returns have very heavy tails.

4. Biological Studies: Researchers use the CLT to analyze biological data, such as measuring the heights or weights of organisms within a population, to draw conclusions about the overall characteristics of that population.

5. Educational Testing: When designing standardized tests, test makers collect data from a sample of students to establish norms. The CLT assists in understanding how the test scores might be distributed among all students who take the test.

6. Medical Research: Clinical trials involve analyzing data from a sample of participants to make inferences about the entire population. The CLT helps researchers estimate parameters like average response to a treatment or the occurrence of side effects.

7. Marketing and Consumer Behavior: Companies analyze customer behaviors, such as purchase amounts or website clicks, using the CLT to understand the overall patterns and make marketing decisions based on the averages.

8. Social Sciences: Sociologists and researchers studying social phenomena often collect data from different groups. The CLT allows them to make conclusions about the entire population’s behaviors or attitudes.

9. Economics and Policy Analysis: Economists use the CLT to analyze economic data, such as income distribution or unemployment rates, to better understand the overall economic landscape.

10. Environmental Studies: Environmental scientists use the CLT to analyze data from various samples to estimate parameters like pollution levels, species diversity, and habitat health within a larger ecosystem.

11. Sports Statistics: Sports analysts use the CLT to understand and predict player performance based on averages derived from different games or seasons.

In each of these applications, the CLT allows analysts, researchers, and decision-makers to draw meaningful conclusions from sample data about larger populations. It provides a bridge between the behavior of small groups and the behavior of entire populations, making statistical analysis more accurate and reliable.
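The polling example from the list can be made concrete with a short simulation. This is a rough sketch, not a description of how any real pollster works: the true support of 52%, the poll size of 1,000, and the name `run_poll` are assumptions chosen for illustration. Each simulated poll builds the usual normal-approximation 95% margin of error, and the script counts how often the true value falls inside it.

```python
import math
import random

random.seed(1)  # fixed seed so the run is repeatable

TRUE_SUPPORT = 0.52   # hypothetical share of voters backing a candidate
POLL_SIZE = 1000      # hypothetical number of respondents per poll
NUM_POLLS = 1000      # how many independent polls to simulate

def run_poll():
    """Simulate one poll; return the estimated share and its 95% margin of error."""
    votes = sum(random.random() < TRUE_SUPPORT for _ in range(POLL_SIZE))
    p_hat = votes / POLL_SIZE
    # Normal approximation justified by the CLT: estimate +/- 1.96 standard errors.
    margin = 1.96 * math.sqrt(p_hat * (1 - p_hat) / POLL_SIZE)
    return p_hat, margin

covered = 0
for _ in range(NUM_POLLS):
    p_hat, margin = run_poll()
    if abs(p_hat - TRUE_SUPPORT) <= margin:
        covered += 1

coverage = covered / NUM_POLLS
print(f"share of polls whose interval contains the truth: {coverage:.3f}")
```

If the normal approximation is reasonable at this poll size, the printed share should be close to 0.95.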

Central Limit Theorem (CLT) Simplified for Everyone – a Layman, a Kid, a Student, a College Graduate & a Mathematician

For a Layman: The Central Limit Theorem is like a magical rule in statistics. Imagine you flip a coin 100 times and record the share of heads, and then you repeat that whole experiment many times. No matter what kind of coin you have, fair or lopsided, the shares you recorded will pile up in a nice, smooth curve around the coin’s true chance of heads. This curve is called a “bell curve” or “normal distribution.” It’s like nature’s way of making things balance out, even if individual results are a bit scattered. This idea helps scientists and researchers understand things better.

Example for a Layman: Think about guessing how many jellybeans are in a jar. If a lot of people guess and you average all those guesses, you’ll often get a number close to the actual amount, as long as the guesses are not all off in the same direction. And if you split the guessers into many large groups and average each group, those group averages tend to form a nice bell-shaped curve, even if the individual guesses are scattered too high and too low.

For a Kid: Okay, so imagine you have a bunch of different dice, some with lots of sides and some with just a few. Now, you roll each die a bunch of times and write down the numbers you get. If you add up all the numbers from those rolls to get one total, and then do this whole thing over and over, you’ll notice that the totals pile up into a special mountain-shaped curve. This is the Central Limit Theorem’s magic: it says that no matter which dice you used, that mountain will appear when you add up lots of rolls and repeat the game many times.

Example for a Kid: Picture playing a game where you roll different dice and add up the numbers. Even if you use dice with weird shapes or different sides, when you play the game many, many times, the total will often land close to a certain number most of the time. It’s like the game’s way of making everything even.
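The dice game can be played by a computer. In the sketch below, the mix of dice, the number of rolls, and the name `play_one_game` are made up for illustration. It plays the game 5,000 times and draws a sideways bar chart of the totals, so the “mountain” can be seen directly.

```python
import random
import statistics
from collections import Counter

random.seed(2)  # fixed seed so the run is repeatable

DICE_SIDES = [4, 6, 8, 12, 20]   # hypothetical mix of dice
ROLLS_PER_DIE = 4
NUM_GAMES = 5000

def play_one_game():
    """Roll every die ROLLS_PER_DIE times and return the grand total."""
    return sum(
        random.randint(1, sides)
        for sides in DICE_SIDES
        for _ in range(ROLLS_PER_DIE)
    )

totals = [play_one_game() for _ in range(NUM_GAMES)]

# Group totals into buckets of width 10 and draw a sideways bar chart.
buckets = Counter((total // 10) * 10 for total in totals)
for start in sorted(buckets):
    bar = "#" * (buckets[start] // 25)   # one '#' per 25 games
    print(f"{start:3d}-{start + 9:3d} {bar}")

print("average total:", round(statistics.fmean(totals), 1))
```

The longest bars should appear in the middle buckets and shrink toward both ends. The average total of one game works out to 110 for this particular mix of dice, so the printed average should be close to that.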

For a Student: The Central Limit Theorem is a powerful concept in statistics. It states that if you draw many random samples of the same, reasonably large size from a population, add up the values in each sample, and then graph those sums, the resulting graph will resemble a bell-shaped curve known as a normal distribution. This shape tends to emerge regardless of the original shape of the population’s data, provided the data have a finite variance. The same holds for sample averages, since an average is just the sum divided by the sample size. This theorem is fundamental because it lets us understand how averages from various samples tend to behave, which is crucial for making statistical inferences.

Example for a Student: Imagine you’re measuring the heights of students in a large school, where the heights do not follow a bell curve. If you repeatedly pick a random group of, say, 30 students and record that group’s average height, you’ll find that the distribution of these average heights forms a bell curve, which is the Central Limit Theorem in action.

For a College Graduate: The Central Limit Theorem is a fundamental concept in statistics that sheds light on the behavior of sample averages. It states that regardless of the underlying distribution of a population (provided its variance is finite), when we repeatedly draw random samples of a sufficiently large size from that population and average each one, the distribution of these sample averages will approximate a normal distribution. The Law of Large Numbers tells us that sample averages settle around the population mean; the Central Limit Theorem goes further and describes the Gaussian shape and the scale of the remaining fluctuations around that mean.

Example for a College Graduate: Consider a manufacturing process that produces parts with varying lengths. Even if the lengths of the individual parts do not follow a normal distribution, the distribution of the sample averages (e.g., average lengths of parts from different production batches) will tend to be normally distributed. This theorem is a cornerstone in statistics, allowing us to confidently apply statistical methods to a wide range of scenarios by relying on the known properties of the normal distribution.
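The manufacturing example can be sketched in a few lines. Everything specific here is an assumption made for illustration: the 50 mm nominal length, the exponentially distributed overshoot with mean 0.5 mm, the batch size of 40, and the name `part_length`. The script checks how many batch means land inside the band that a normal approximation says should hold about 95% of them.

```python
import math
import random
import statistics

random.seed(3)  # fixed seed so the run is repeatable

NOMINAL_MM = 50.0      # hypothetical minimum part length
MEAN_EXCESS_MM = 0.5   # hypothetical average overshoot, exponentially distributed
BATCH_SIZE = 40
NUM_BATCHES = 2000

def part_length():
    """Right-skewed length: never below nominal, occasionally much longer."""
    return NOMINAL_MM + random.expovariate(1 / MEAN_EXCESS_MM)

# Population mean and standard deviation implied by the assumptions above.
mu = NOMINAL_MM + MEAN_EXCESS_MM
sigma = MEAN_EXCESS_MM                      # an exponential's SD equals its mean
standard_error = sigma / math.sqrt(BATCH_SIZE)

batch_means = [
    statistics.fmean([part_length() for _ in range(BATCH_SIZE)])
    for _ in range(NUM_BATCHES)
]

# Under a normal approximation, about 95% of batch means fall within
# 1.96 standard errors of the population mean.
inside = sum(abs(m - mu) <= 1.96 * standard_error for m in batch_means)
share_inside = inside / NUM_BATCHES
print(f"share of batch means inside the 95% band: {share_inside:.3f}")
```

Individual part lengths in this setup are strongly skewed, yet the share printed for the batch means should come out close to 0.95.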

Central Limit Theorem (CLT) Explained to a Mathematician

The Central Limit Theorem is a fundamental concept in probability and statistics that describes the behavior of the distribution of sample means when drawn from a population with any distribution that has a finite variance, as long as the sample size is sufficiently large. In other words, when you take multiple random samples of a given size from a population and calculate the mean of each sample, the distribution of these sample means will approach a normal distribution as that size grows, regardless of the shape of the original population distribution. This is a remarkable property that allows statisticians to make inferences about population parameters using methods based on the normal distribution.

Mathematical Statement: If X1, X2, …, Xn are independent and identically distributed random variables with mean μ and finite variance σ² > 0, then the distribution of the standardized sample mean, (X̄ − μ) / (σ / √n), approaches the standard normal distribution as the sample size n approaches infinity. Equivalently, for large n the sample mean X̄ is approximately normally distributed. The mean of the sample means (E[X̄]) is equal to the population mean (μ), and the standard deviation of the sample means (σ[X̄]) is equal to the population standard deviation (σ) divided by the square root of the sample size (n).

Mathematical Formulas:

- Sample Mean: X̄ = (X1 + X2 + … + Xn) / n

- Mean of Sample Means: E[X̄] = μ (same as population mean)

- Standard Deviation of Sample Means: σ[X̄] = σ / √n (where σ is the population standard deviation)
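For readers who prefer typeset notation, the classical (Lindeberg–Lévy) form of the theorem can be written as below. This is a restatement under the same assumptions as above (independent, identically distributed variables with mean μ and finite variance σ² > 0); the symbols Z_n for the standardized mean, S_n for the sum X_1 + … + X_n, and Φ for the standard normal cumulative distribution function are notation introduced here.

```latex
\[
  Z_n \;=\; \frac{\bar{X}_n - \mu}{\sigma / \sqrt{n}}
      \;=\; \frac{S_n - n\mu}{\sigma \sqrt{n}},
  \qquad
  \lim_{n \to \infty} P\left( Z_n \le z \right) \;=\; \Phi(z)
  \quad \text{for every real } z .
\]
```

The two expressions for Z_n agree because the sample mean is S_n / n, so standardizing the mean and standardizing the sum give the same quantity.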

Derivation: To understand why the CLT works, let’s consider the sum of n independent random variables:

Sn = X1 + X2 + … + Xn

If the random variables have the same mean μ and variance σ², then the mean of the sum Sn is n times the mean μ, and the variance of the sum is n times the variance σ².

Now, let’s define the sample mean X̄:

X̄ = Sn / n

The expected value of the sample mean E[X̄] is:

E[X̄] = E[Sn] / n = (nμ) / n = μ

The variance of the sample mean Var(X̄) is:

Var(X̄) = Var(Sn) / n² = (nσ²) / n² = σ² / n

As n becomes larger, the variance of the sample mean decreases. The standard deviation of the sample mean is the square root of the variance:

σ[X̄] = σ / √n

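The calculation above fixes only the center and the spread of X̄; it does not by itself show that the shape is normal. That is the content of the Central Limit Theorem, whose standard proof works with characteristic functions: when n is large, the distribution of X̄ is closely approximated by a normal distribution with mean μ and standard deviation σ / √n. This means that if you repeatedly take random samples of size n and calculate their means, the distribution of those sample means will resemble a bell-shaped curve.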
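The formulas E[X̄] = μ and σ[X̄] = σ / √n can be checked numerically. The sketch below assumes, purely for illustration, a Uniform(0, 1) population (mean 1/2, variance 1/12) and a sample size of 25; these choices are not part of the derivation.

```python
import math
import random
import statistics

random.seed(4)  # fixed seed so the run is repeatable

SAMPLE_SIZE = 25     # n in the formulas above (illustrative choice)
NUM_SAMPLES = 4000   # number of sample means to generate

# Uniform(0, 1) has mean 1/2 and variance 1/12.
mu = 0.5
sigma = math.sqrt(1 / 12)

sample_means = [
    statistics.fmean([random.random() for _ in range(SAMPLE_SIZE)])
    for _ in range(NUM_SAMPLES)
]

observed_mean = statistics.fmean(sample_means)
observed_sd = statistics.stdev(sample_means)
predicted_sd = sigma / math.sqrt(SAMPLE_SIZE)

print(f"mean of sample means: {observed_mean:.4f} (population mean {mu})")
print(f"SD of sample means:   {observed_sd:.4f} (sigma / sqrt(n) = {predicted_sd:.4f})")
```

The observed values should agree with the predicted ones up to simulation noise, and raising `SAMPLE_SIZE` should shrink the spread in proportion to 1 / √n.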

In summary, the Central Limit Theorem is a cornerstone of statistics that allows us to confidently use the properties of the normal distribution to make inferences about population parameters based on sample data, even when the underlying population distribution might not be normal. It is a powerful tool for hypothesis testing, confidence intervals, and other statistical analyses.

Key Aspects of the Central Limit Theorem (CLT)

The Central Limit Theorem (CLT) has a few key aspects that can help you understand how it works. Let’s break them down in simpler terms:

1. Sample Size Matters: The size of the groups you’re averaging, called the sample size, is important. The CLT says that as you take larger and larger sample sizes, the averages of those samples will get closer and closer to following a bell curve. So, bigger sample sizes lead to better adherence to the bell curve behavior.

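2. Almost Any Kind of Data Works: The original data you’re dealing with can be all sorts of shapes and patterns. It doesn’t matter if the data doesn’t look like a bell curve by itself. When you gather lots of averages from samples of these data points, the CLT kicks in and those averages form a bell curve. The one requirement is that the data have a finite variance; distributions with extremely heavy tails, such as the Cauchy distribution, are the exception.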

3. Magic of Averages: The magic of the CLT is in averages. When you average out a bunch of data points in a sample, you’re focusing on the middle ground. The bell curve that appears through the CLT shows how most of the averages are close to the real average of the whole group.

4. Universal Nature of the Bell Curve: The bell curve, or normal distribution, is seen all over nature. It pops up, at least approximately, in things like the heights of people, scores in a test, and even measurements like weight. The CLT offers one explanation: such quantities often result from many small, independent influences added together, and sums and averages of that kind tend toward a bell curve.

5. Making Predictions: Because the bell curve appears when we look at averages, statisticians can make predictions about the larger group based on these averages. For example, if you know the average test score of a few classes, you can use the CLT to predict what the average score might be for the entire school.

6. Confidence in Statistics: The CLT is like a safety net for statisticians. It assures them that when they work with averages from different samples, they can rely on the familiar properties of the bell curve. This confidence allows them to use mathematical tools more effectively for understanding and predicting data behavior.

7. Practical Applications: The CLT has practical applications in various fields. From predicting election outcomes based on poll averages to estimating how much time a project might take based on average completion times, the CLT is a handy tool for making informed decisions using averages.
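The first point in the list, that larger samples follow the bell curve more closely, can be checked with a short experiment. The sketch below assumes an exponential population, which has a long right tail, and measures the lopsidedness (skewness) of the sample averages for several sample sizes; a perfect bell curve has skewness 0. The helper names `skewness` and `skewness_of_sample_means` are made up for this illustration.

```python
import random
import statistics

random.seed(5)  # fixed seed so the run is repeatable

def skewness(values):
    """Sample skewness: 0 for a symmetric shape, positive for a long right tail."""
    center = statistics.fmean(values)
    spread = statistics.pstdev(values)
    return statistics.fmean([((v - center) / spread) ** 3 for v in values])

def skewness_of_sample_means(sample_size, num_samples=3000):
    """Draw many samples from an exponential population and measure how
    lopsided the distribution of their averages is."""
    means = [
        statistics.fmean([random.expovariate(1.0) for _ in range(sample_size)])
        for _ in range(num_samples)
    ]
    return skewness(means)

results = {n: skewness_of_sample_means(n) for n in (1, 4, 16, 64)}
for n, skew in results.items():
    print(f"sample size {n:3d}: skewness of the averages = {skew:.2f}")
```

For this population the theoretical skewness of the averages is 2 / √n, so the printed values should shrink toward 0 as the sample size grows, up to simulation noise.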

In summary, the Central Limit Theorem is a powerful idea that tells us no matter what kind of data we start with, when we focus on averages from different samples, they tend to form a bell curve. This bell curve helps statisticians make predictions and better understand the behavior of groups of data.
